Style-based Drum Synthesis with GAN Inversion

October 10, 2021

Drysdale, J., Tomczak, M., and Hockman, J. 2021. Style-based Drum Synthesis with GAN Inversion. In Extended Abstracts for the Late-Breaking Demo Sessions of the 22nd International Society for Music Information Retrieval Conference, Online. [paper, poster]

This blog post contains the supplementary material accompanying the late-breaking demo “Style-based Drum Synthesis with GAN Inversion”, presented at the 22nd International Society for Music Information Retrieval (ISMIR) Conference.

Abstract

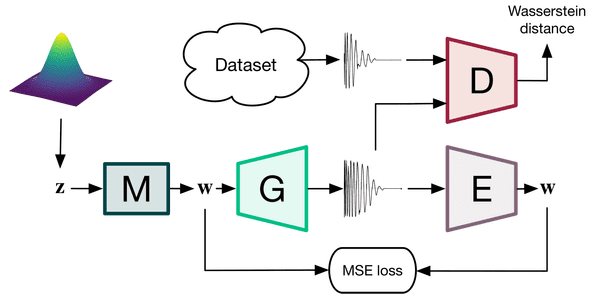

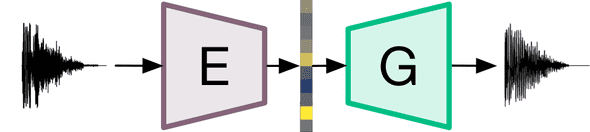

Neural audio synthesizers exploit deep learning as an alternative to traditional synthesizers that generate audio from hand-designed components such as oscillators and wavetables. For a neural audio synthesizer to be applicable to music creation, meaningful control over the output is essential. This paper provides an overview of an unsupervised approach to deriving useful feature controls learned by a generative model. A system for generation and transformation of drum samples using a style-based generative adversarial network (GAN) is proposed. The system provides functional control of style features of drum sounds based on principal component analysis (PCA) applied to the latent space. Additionally, we propose the use of an encoder trained to invert input drum sounds back to the latent space of the pre-trained GAN. We experiment with three modes of control and provide audio results on a supporting website.
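The PCA-based control described in the abstract can be illustrated with a minimal sketch. It assumes a batch of latent vectors has already been sampled from the generator's latent space. The latent size (128), the number of samples (1000), and the function names `latent_principal_directions` and `move_along_direction` are hypothetical and chosen only for illustration; they are not taken from the project repository, and random vectors stand in for real latent codes.

```python
import numpy as np


def latent_principal_directions(latents, num_components=3):
    """Return the mean, the top principal directions, and their variances."""
    latents = np.asarray(latents, dtype=np.float64)
    mean = latents.mean(axis=0)
    centered = latents - mean
    # SVD of the centred data: rows of vt are unit-length principal
    # directions, ordered from most to least variance explained.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained_variance = singular_values ** 2 / (len(latents) - 1)
    return mean, vt[:num_components], explained_variance[:num_components]


def move_along_direction(latent, direction, amount):
    """Shift one latent vector along a principal direction by `amount`."""
    return latent + amount * direction


# Stand-in data (assumption): random vectors in place of sampled latent codes.
rng = np.random.default_rng(0)
sampled_latents = rng.standard_normal((1000, 128))

mean, directions, variances = latent_principal_directions(sampled_latents)

# An edited latent code; in a real system it would be passed to the generator.
edited = move_along_direction(sampled_latents[0], directions[0], amount=2.0)
```

In a setup like this, each principal direction acts as one control: varying `amount` moves a latent code along that direction, and the generator renders the resulting change in the drum sound.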

Code

The GitHub repository for this project is available here. The repo contains instructions for installation and usage for a TensorFlow implementation of the style-based drum synthesiser and audio inversion network.

Audio Examples

Training Data Vs Generations

A comparison between (left) a random selection of examples from the training dataset and (right) a random selection of generated drum sounds.

Audio Inversion Network

An A-B comparison of audio inputs (A) encoded with the audio inversion network and the drum sounds (B) generated from the resulting inverted latent codes: (left) the audio input and (right) the corresponding generation.

Additionally, the examples below demonstrate the system's capacity to generate drum sounds from alternative audio inputs such as beatboxing and sliced breakbeats.

Usage demonstration

Example usage within loop-based electronic music compositions. The percussive elements of the following tracks were created using a selection of samples from the generated data. A light amount of post-processing (equalisation and volume envelope shaping) was applied to mix the sounds.

Some more examples can be found here: https://soundcloud.com/beatsbygan

Interpolation demonstration

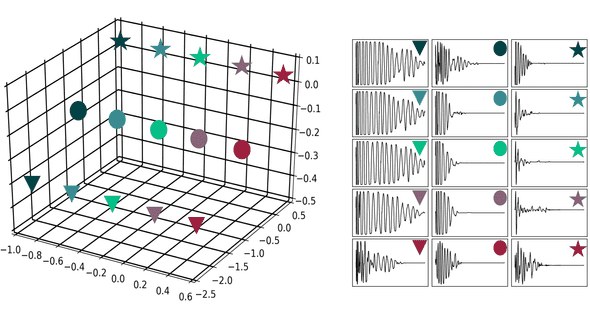

The proposed system learns to map points in the latent space to the generated waveforms. The structure of the latent space can be explored by interpolating between points in the space.

Figure 2: Interpolation in the latent space for kick drum generation. Kick drums are generated for each point along linear paths through the latent space (left). Paths are colour coded and the corresponding generated audio appears across rows (right).

A to B interpolation

In the following examples, two generated drum samples are selected and their latent vectors are noted. A linear path of 30 steps between each latent vector is created and a waveform is generated for each of those 30 steps.

Interpolating between Snare A and Snare B.

Interpolating between Kick A and Kick B.

Interpolating between Cymbal A and Cymbal B.
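The A to B procedure above can be sketched in a few lines of NumPy. This is an illustrative sketch rather than the project's code: the latent size (128) and the function name `interpolate_latents` are assumptions, random vectors stand in for the latent codes of two selected snares, and the 30 steps are assumed to include both endpoints.

```python
import numpy as np


def interpolate_latents(z_a, z_b, num_steps=30):
    """Return `num_steps` latent vectors evenly spaced on the line from z_a to z_b."""
    z_a = np.asarray(z_a, dtype=np.float64)
    z_b = np.asarray(z_b, dtype=np.float64)
    # Mixing weights from 0 (pure A) to 1 (pure B), endpoints included.
    t = np.linspace(0.0, 1.0, num_steps)[:, None]
    return (1.0 - t) * z_a + t * z_b


# Stand-in latent vectors (assumption): random codes in place of Snare A and Snare B.
rng = np.random.default_rng(0)
latent_dim = 128
z_snare_a = rng.standard_normal(latent_dim)
z_snare_b = rng.standard_normal(latent_dim)

# One row per step; each row would be passed to the generator to render a waveform.
path = interpolate_latents(z_snare_a, z_snare_b)
```

The first and last rows of `path` reproduce the two selected latent vectors, so the rendered sequence starts at sample A and ends at sample B.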

References

@inproceedings{drysdale2021sds,
  title={Style-based Drum Synthesis with GAN Inversion},
  author={Drysdale, Jake and Tomczak, Maciej and Hockman, Jason},
  booktitle={Extended Abstracts for the Late-Breaking Demo Sessions of the 22nd International Society for Music Information Retrieval (ISMIR) Conference},
  year={2021}
}